Assistant Professor - Digital Future Lab - Hasselt University

Research and Demos


Large-area Spatially Aligned Anchors (LASAA)

[GitHub standalone]


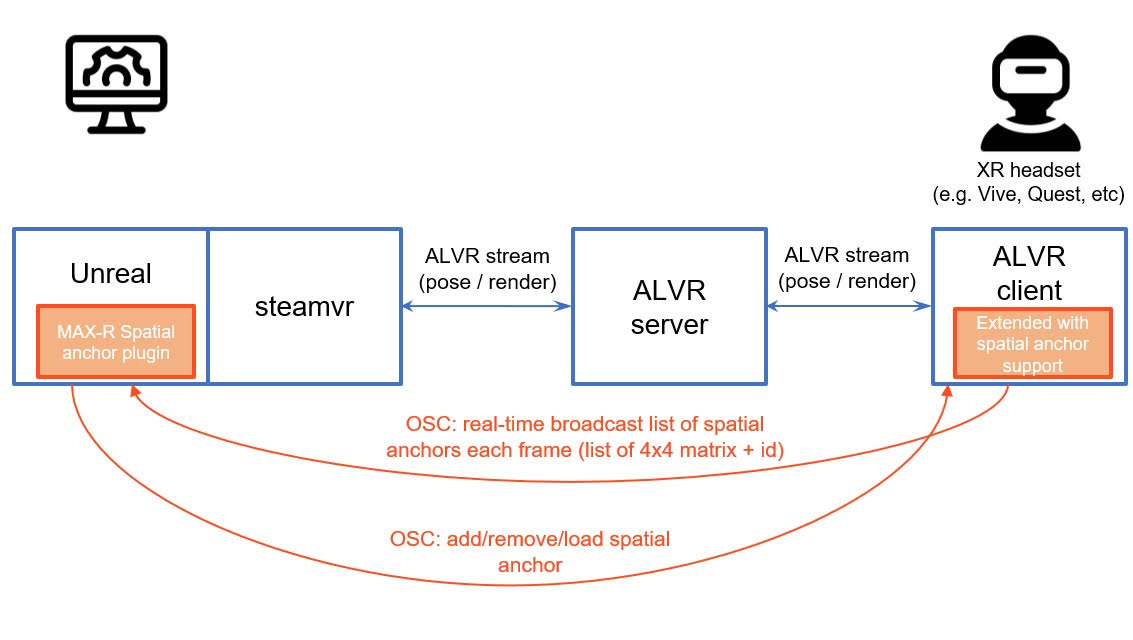

ALVR version for Large-area Spatially Aligned Anchors (LASAA-ALVR)

[GitHub for custom ALVR client] [GitHub for UE5 plugin]


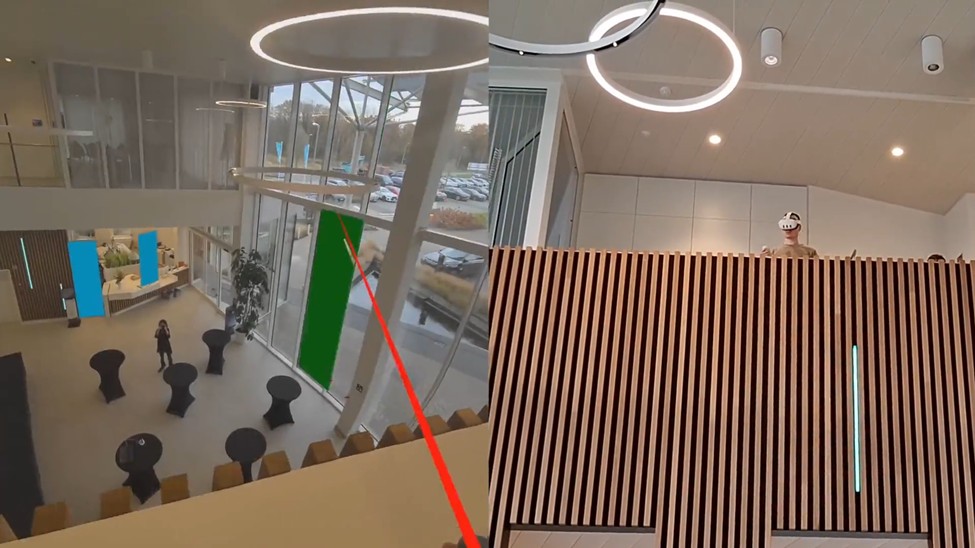

Stereopsia Demo 2024

We had a great experience at Stereopsia 2024, where we showcased our demo on large-area alignment of digital content for XR, developed and integrated within the MAX-R Project. With this technology, you can get out of your VR seat and start roaming large buildings. A big thank you to our fantastic partner, CREW Brussels, for their support!


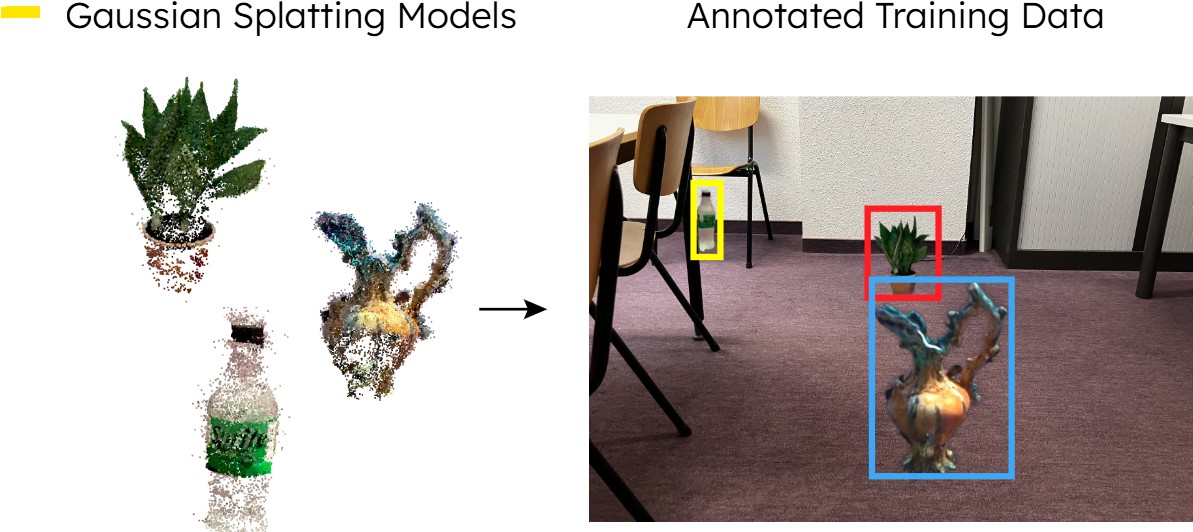

Cut-and-Splat: Leveraging Gaussian Splatting for Synthetic Data Generation

This is the code for our ROBOVIS paper Cut-and-Splat: Leveraging Gaussian Splatting for Synthetic Data Generation.

[GitHub]


DigiBuild

Results from the VLAIO COOCK+ project DigiBuild (Digital Support for Construction Industrialization via Off-site Production) and the Horizon Europe project MAX-R (Mixed Augmented and Extended Reality Media Pipeline).


VR Belevingspad (POM Limburg)

In this demo, our goal is to offer people a hyper-realistic, immersive experience. We've now explored a new technology that allows us to expand the freedom of movement while maintaining the photorealism of videos. Users can move forward, backward, and side to side within a photorealistic environment. Combined with a motion platform, it truly feels like you are walking through a large, real-world space. In collaboration with POM Limburg and Gijs Van Vaerenbergh, we applied this technology in a wonderful setting, giving users the sensation of walking along a path in a real forest. This is a major leap forward in immersive experiences, bringing VR closer to true exploration!

[Link to POM Limburg]


Genetic Learning for Designing Sim-to-Real Data Augmentations

This repository contains the implementation of the GeneticAugment algorithm, which automatically finds good data augmentation strategies for training on synthetic data. Given an unlabeled synthetic dataset and an unlabeled real dataset, the algorithm uses genetic learning to find image augmentations that, when applied to the synthetic data, improve generalization to real data. The genetic learning is steered by two metrics: one rewards greater variation in the augmented synthetic images, and the other rewards a smaller distance between the augmented images and the real images.

[GitHub] [Paper]
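The search described above can be illustrated with a small sketch. This is not the GeneticAugment implementation: the genome layout (a list of augmentation strengths in [0, 1]), the function names `fitness`, `evolve` and `toy_score`, and the toy metrics are all assumptions made for illustration. In a real setting the two metrics would be computed from the augmented synthetic images and the real images.

```python
import random


def fitness(variation, distance_to_real, weight=1.0):
    """Higher is better: reward variation, penalise distance to the real images."""
    return variation - weight * distance_to_real


def toy_score(genome):
    """Stand-in metrics (hypothetical), used only so the sketch runs end to end."""
    target = [0.3, 0.7, 0.5]  # made-up strengths that "match" the real data
    variation = sum(genome) / len(genome)
    distance = sum((g - t) ** 2 for g, t in zip(genome, target))
    return fitness(variation, distance, weight=4.0)


def evolve(population, score_fn, generations=20, keep=4, mutation_scale=0.1, seed=0):
    """Elitist genetic search over augmentation strengths; requires keep >= 2."""
    rng = random.Random(seed)
    population = [list(g) for g in population]
    for _ in range(generations):
        # Selection: the best `keep` genomes survive unchanged.
        parents = sorted(population, key=score_fn, reverse=True)[:keep]
        children = []
        while len(parents) + len(children) < len(population):
            a, b = rng.sample(parents, 2)
            # Uniform crossover, then Gaussian mutation clamped to [0, 1].
            child = [rng.choice(pair) for pair in zip(a, b)]
            child = [min(1.0, max(0.0, g + rng.gauss(0.0, mutation_scale))) for g in child]
            children.append(child)
        population = parents + children
    return max(population, key=score_fn)


if __name__ == "__main__":
    init_rng = random.Random(1)
    start = [[init_rng.random() for _ in range(3)] for _ in range(12)]
    print(evolve(start, toy_score))
```

Because the best genomes are carried over unchanged each generation, the best score never decreases. The `weight` parameter is one simple way to trade off the two metrics; other combinations are equally plausible.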


CAD2Render (PILS SBO)

CAD2Render is a highly customizable framework for generating high-quality synthetic data for deep learning purposes. It is built upon Unity's High Definition Render Pipeline (HDRP) for high-quality ray tracing with full global illumination. The framework supports variations in model types, number of models, environments, viewpoints, exposure, supporting structures, materials, material colors, etc. CAD2Render is developed in the context of the Flanders Make PILS SBO project.

[GitHub to CAD2Render]
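To make the kind of variation listed above concrete, here is a minimal sketch of sampling one randomized scene description. It is not CAD2Render's configuration format or API (CAD2Render itself runs inside Unity): the function `sample_scene`, every key name, and every value range are hypothetical and chosen only for illustration.

```python
import random


def sample_scene(rng, models, environments, materials, max_objects=5):
    """Draw one randomized scene description (all keys and ranges are hypothetical)."""
    n_objects = rng.randint(1, max_objects)  # inclusive on both ends
    return {
        "environment": rng.choice(environments),
        "exposure": rng.uniform(-1.0, 1.0),
        "camera": {
            "azimuth_deg": rng.uniform(0.0, 360.0),
            "elevation_deg": rng.uniform(10.0, 80.0),
        },
        "objects": [
            {
                "model": rng.choice(models),
                "material": rng.choice(materials),
                "color_rgb": [rng.random() for _ in range(3)],
            }
            for _ in range(n_objects)
        ],
    }


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed so a dataset can be regenerated
    scene = sample_scene(
        rng,
        models=["bracket", "gear"],
        environments=["workshop", "studio"],
        materials=["steel", "aluminium"],
    )
    print(scene)
```

Passing the random generator in explicitly keeps each sampled scene reproducible from its seed, which is useful when a rendered image has to be traced back to its parameters.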


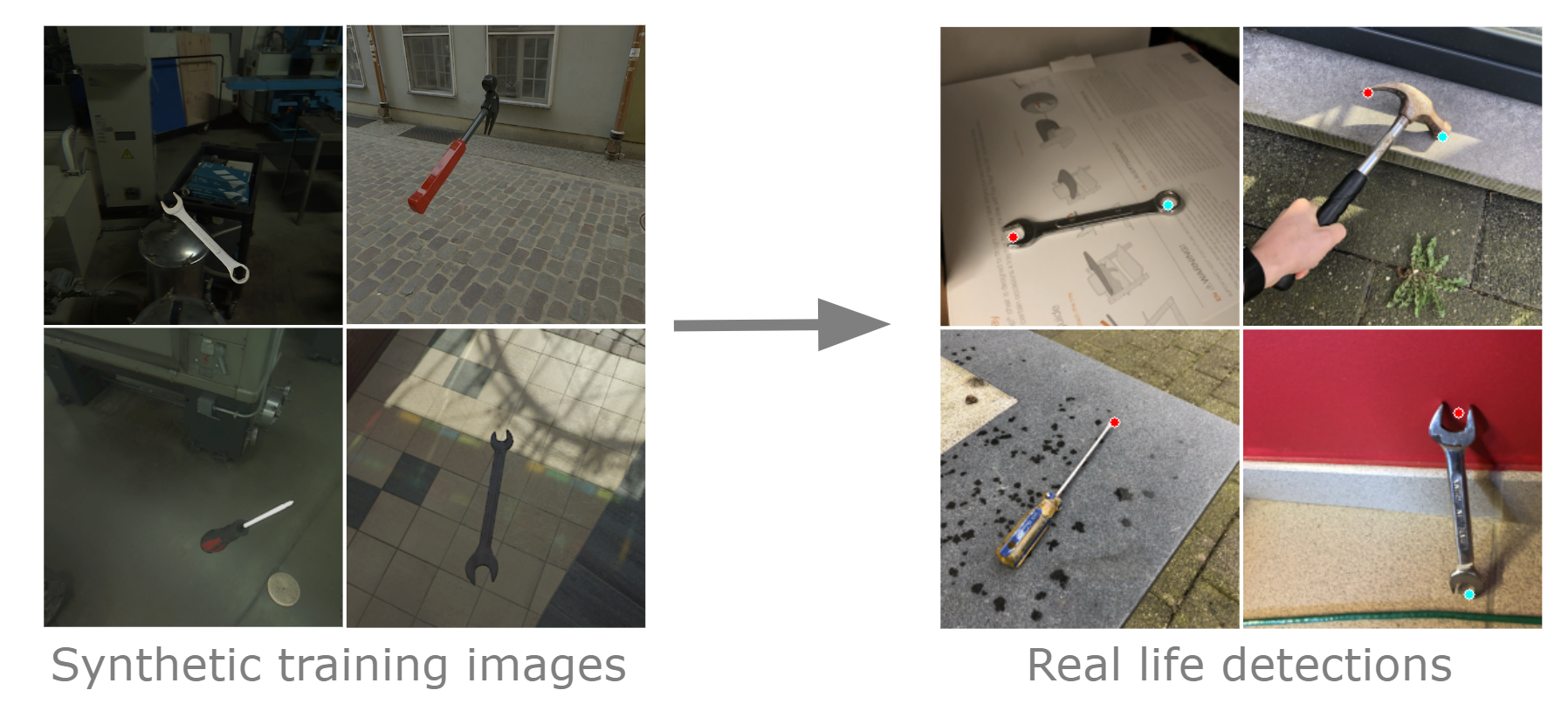

Tool Keypoint Detection (PILS SBO and FAMAR ICON)

The overall project goal is to create an economically feasible, user-centred Augmented Reality application methodology for flexible assembly and inspection operations in low-volume, high-mix manufacturing environments. To enable this, object and keypoint detection algorithms were developed to facilitate state tracking.

[Full paper] [Dataset]


Wetenschapsdag 2023 UHasselt

Interactive Virtual Production.


Dataset of Industrial Metal Objects

We present a diverse dataset of industrial metal objects. These objects are symmetric, textureless and highly reflective, leading to challenging conditions not captured in existing datasets. Our 6D object pose estimation dataset contains both real-world and synthetic images. Real-world data is obtained by recording multi-view images of scenes with varying object shapes, materials, carriers, compositions and lighting conditions. This leads to over 30,000 images, accurately labelled using a new public tool. Synthetic data is obtained by carefully simulating real-world conditions and varying them in a controlled and realistic way. This leads to over 500,000 synthetic images. The close correspondence between synthetic and real-world data, and controlled variations, will facilitate sim-to-real research. Our dataset's size and challenging nature will facilitate research on various computer vision tasks involving reflective materials.

[Dataset website]


DIMO Object Detection Analysis

We provide tools to analyse aspects of generating synthetic data as well as the techniques used to train the models. We do so by devising a number of experiments in which models are trained on the Dataset of Industrial Metal Objects (DIMO). This dataset contains both real and synthetic images. The synthetic part has different subsets that are either exact synthetic copies of the real data or copies with certain aspects randomised. This allows us to analyse which types of variation are good for synthetic training data and which aspects should be modelled to closely match the target data. Furthermore, we investigate which training techniques are beneficial for generalisation to real data, and how to use them. Additionally, we analyse how real images can be leveraged when training on synthetic images. All these experiments are validated on real data and benchmarked against models trained on real data. The results offer a number of interesting takeaways that can serve as basic guidelines for using synthetic data for object detection.

[GitHub code] [Conference Website]


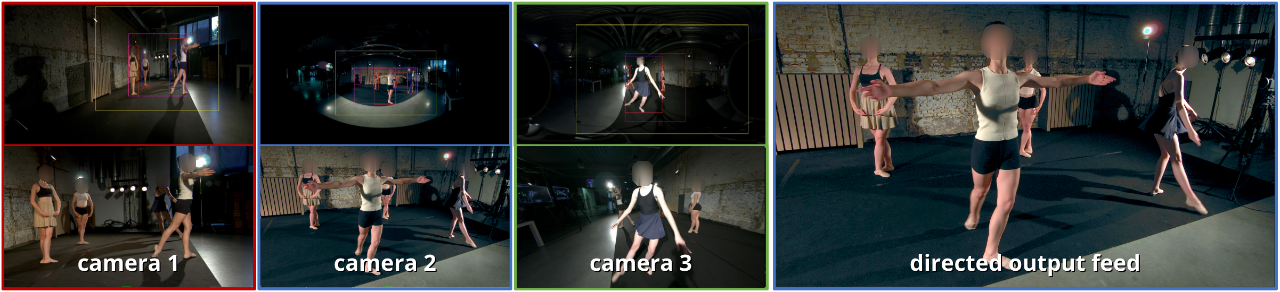

OmniDrone (FWO SBO)

Capturing an event from multiple camera angles can give a viewer the most complete and interesting picture of that event. For the footage to be suitable for broadcasting, a human director needs to decide what to show at each point in time. This can become cumbersome with an increasing number of camera angles. The introduction of omnidirectional or wide-angle cameras has allowed events to be captured more completely, making it even more difficult for the director to pick a good shot. In this paper, a system is presented that, given multiple ultra-high resolution video streams of an event, can generate a visually pleasing sequence of shots that follows the relevant action of the event. Because the algorithm is general purpose, it can be applied to most scenarios that feature humans. The proposed method allows for online processing when real-time broadcasting is required, as well as offline processing when the quality of the camera operation is the priority. Object detection is used to detect humans and other objects of interest in the input streams. Detected persons of interest, along with a set of rules based on cinematic conventions, are used to determine which video stream to show and which part of that stream is virtually framed. The user can provide a number of settings that determine how these rules are interpreted. The system is able to handle input from different wide-angle video streams by removing lens distortions. A user study shows, for a number of different scenarios, that the proposed automated director is able to capture an event with aesthetically pleasing video compositions and human-like shot switching behavior.
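The stream-selection step can be sketched as follows. This is an illustrative simplification, not the system from the paper: it assumes each stream has already been reduced to one interest score per frame (for example, summed detection confidences), and it applies a single hypothetical rule, a minimum shot length combined with a switching margin. The function name `select_shots` and its parameters are assumptions.

```python
def select_shots(scores_per_frame, min_shot_frames=3, switch_margin=0.2):
    """Pick one stream index per frame from per-stream interest scores.

    A cut to another stream happens only when the current shot has been held
    for at least `min_shot_frames` frames and the other stream scores higher
    by more than `switch_margin`, which avoids rapid back-and-forth cutting.
    """
    if not scores_per_frame:
        return []
    first = scores_per_frame[0]
    current = max(range(len(first)), key=lambda i: first[i])
    held = 0  # frames the current shot has been on screen
    shots = []
    for scores in scores_per_frame:
        best = max(range(len(scores)), key=lambda i: scores[i])
        if (
            best != current
            and held >= min_shot_frames
            and scores[best] > scores[current] + switch_margin
        ):
            current = best
            held = 0
        shots.append(current)
        held += 1
    return shots


if __name__ == "__main__":
    # Two streams: the action moves from stream 0 to stream 1 after the first frame.
    scores = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0]]
    print(select_shots(scores))  # [0, 0, 0, 1, 1]
```

The function only looks at past and current frames, so it fits the online setting; an offline variant could also look ahead before committing to a cut.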
